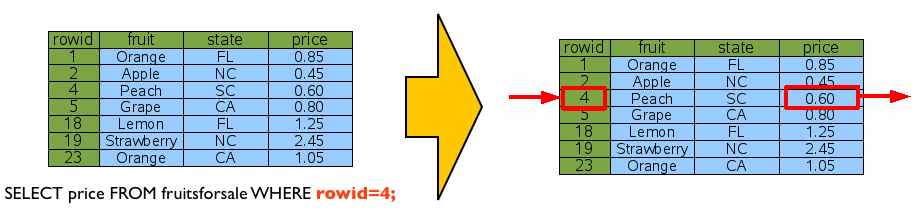

If you estimate that the first method is faster, i.e., that so few rows match the level filter that fetching and sorting the remaining rows is faster than ignoring the non-matching rows while scanning through the table, then you can force the database to use the index with the INDEXED BY clause: SELECT * The database estimates that the second method is faster. Scan through all rows of the table (which are already stored in rowid order), and return any where the level column matches. Then the first 100 of those can be returned. Search the first entry with level>20 in the level_idx index, and then scan through all the following entries and fetch each corresponding row from the table.īecause the index entries are not stored in rowid order, all the results must then be sorted. Copying the archive locally (to, say, make a replica and strip out an index before compressing) is a very slow operation on the archive machine.There are two possible methods to execute this query: The only work I do on the archival dataset is add records and verify that the data has been properly backed up, as described above. Because I occasionally share the entire archive with other researchers, sqlite is a convenient container, but I don't index because I want the files to be small when I back them up and ship them around. The sqlite database is on a very slow machine with tons of disk space, and holds the total archive going back several years. I'm less worried that two corresponding records would contain different values).Ī few more probably irrelevant details: The postgres production system is on a very space-constrained machine, and can only hold about 6 months of data. Is there a better way than counting to validate that records in a specified date range stored in databases on two different systems, one in postgres, one in sqlite, contain the same data? (I presume they do, but I'm a paranoid sort, especially around the idea that my syncing process might somehow drop records.Is there a less janky way to leverage the fact that my data is sorted to get at least some of the benefits of indexing without rolling my own?.If I do decide to insert data out of date order, how can I sort the data and reset the rowids to account for that?.What events would cause the rowid of a given record to change?.Will deleting records create "holes" that sqlite will attempt to "fill in" with subsequent insertions?.Can I therefore implement my own binary search to rapidly find a record with a particular ts? (or use max(rowid) to find the maximum timestamp)?.Is rowid maintained in "insertion order" such that if my data is inserted in order of timestamp, I can assume that a higher rowid will never have a lower timestamp?.Doing a select count(*) where ts > nnn is slow on the archive (as expected), but it occurs to me that I might be able to use rowid to do a binary search for the first record where ts = nnn and then (perhaps) subtract rowids to get my count of records much faster than the ordinary select would, kind of like a home grown index (or, at very least, allow me to restrict the part of the database that needs to be searched by adding where rowid > mmm and rowid < ooo to the query.

To validate that the archive is tracking with my production data, I perform a "checksum" of sorts, counting the number of records in a given date range, and comparing the archive to the production dataset. Multiple records can have the same timestamp, but the timestamp will only increase over time. Therefore, the sqlite db is unindexed.Įvery record has a timestamp (stored in epoch ms), and data is never inserted out of date order. Generally speaking, archive size is more important than archive performance.

I am using this as archival backup for working data on a different system (in postgres).

I have a large and growing table I'm maintaining in sqlite (250M+ rows).

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed